Data Reliability

Analytics You Can Trust at Scale

Monitoring hundreds of cameras continuously — so your data stays accurate even when the real world doesn't cooperate.

Computer vision analytics are only as reliable as the data they are built on. The Digeiz platform combines video infrastructure monitoring, automated anomaly detection and data reconstruction to keep analytics accurate, consistent and operational at scale: across 5,662 cameras, incidents are detected in under 5 minutes and data completeness reaches 98%. The reliability of this pipeline was independently verified as part of a 2024 CESP audit.

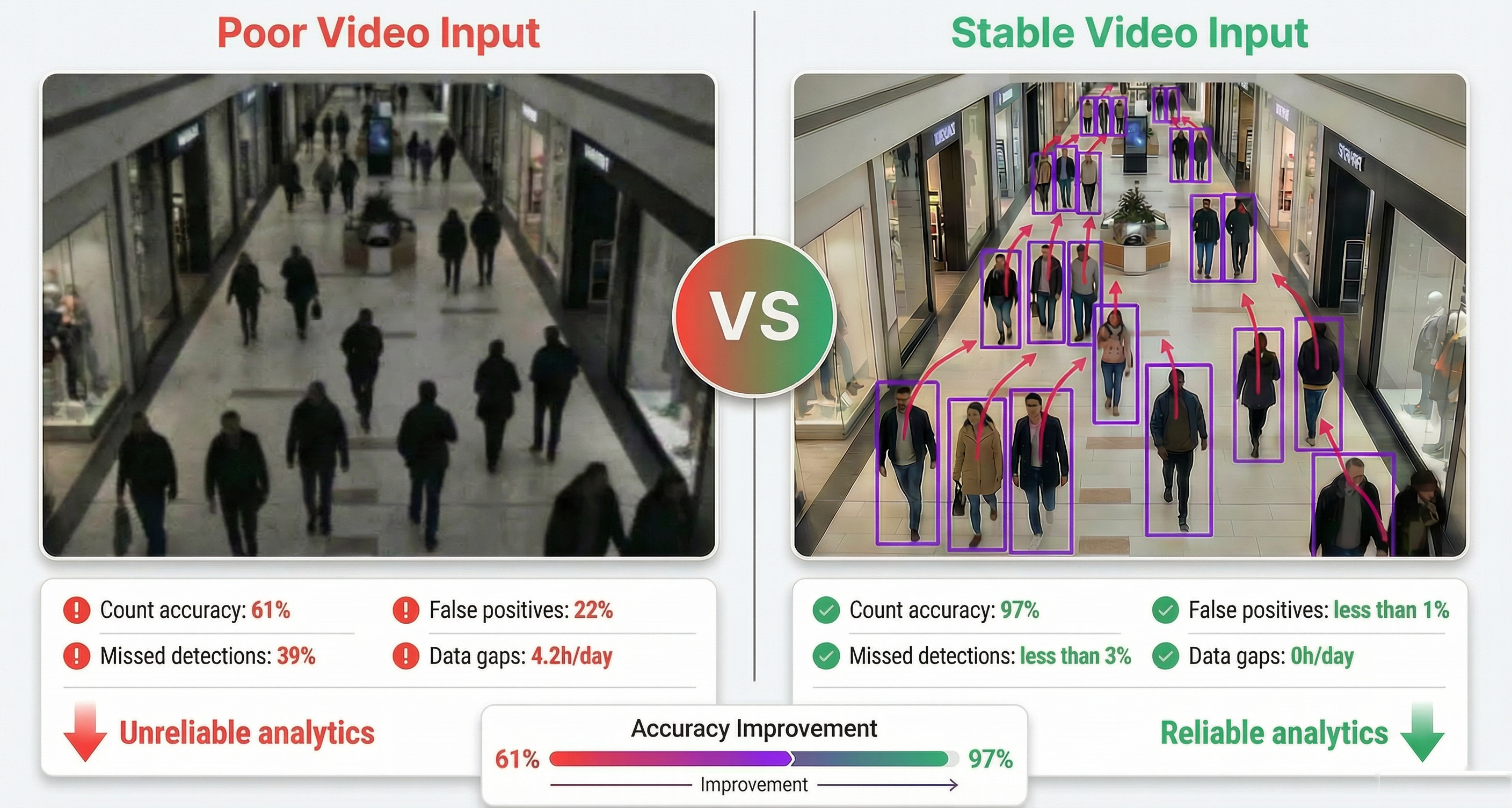

Why data reliability matters

Computer vision systems depend entirely on the quality of the visual data they receive. Even the most advanced AI models cannot produce reliable insights if the source video streams are degraded.

In large deployments involving hundreds of cameras, several factors can affect data quality:

- Camera movement or misalignment

- Zoom or configuration changes

- Partial obstructions

- Unstable video streams

- Degraded network conditions

Ensuring reliable analytics therefore requires continuous monitoring of both the video infrastructure and the generated data.

Video infrastructure monitoring

The Digeiz platform continuously monitors the health and stability of video infrastructures across deployments. Large installations may involve hundreds of cameras distributed across multiple areas and connected to multiple video management systems. Monitored indicators include:

- Stability of the image frame

- Camera zoom or configuration changes

- Camera obstruction

- Availability of video streams

- Stability and latency of video feeds

- Image resolution consistency

- Camera availability across the deployment

- Server performance (CPU, GPU, memory)

- Network usage and connectivity

- Distributed processing infrastructure status

When anomalies are detected, alerts are generated to notify operators. This centralized monitoring provides full visibility over large camera networks and ensures that analytics pipelines continue to operate on reliable visual inputs.
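As an illustration only, the kind of per-camera check described above can be sketched in a few lines of Python. This is a minimal sketch, not the Digeiz implementation: the `CameraStatus` fields, the `check_camera` function and the 500 ms latency threshold are all hypothetical names and values chosen for the example.

```python
from typing import List, NamedTuple, Tuple


class CameraStatus(NamedTuple):
    """One health snapshot for a camera (all field names are hypothetical)."""
    camera_id: str
    stream_up: bool
    latency_ms: float
    width: int
    height: int


def check_camera(
    status: CameraStatus,
    expected_resolution: Tuple[int, int],
    max_latency_ms: float = 500.0,  # assumed threshold, for illustration
) -> List[str]:
    """Return a list of human-readable alerts for one camera snapshot."""
    if not status.stream_up:
        # Latency and resolution cannot be measured when the stream is down.
        return [f"{status.camera_id}: stream unavailable"]

    alerts = []
    if status.latency_ms > max_latency_ms:
        alerts.append(
            f"{status.camera_id}: latency {status.latency_ms:.0f} ms "
            f"exceeds {max_latency_ms:.0f} ms"
        )
    if (status.width, status.height) != expected_resolution:
        alerts.append(
            f"{status.camera_id}: resolution {status.width}x{status.height}, "
            f"expected {expected_resolution[0]}x{expected_resolution[1]}"
        )
    return alerts
```

Running such a check over every camera snapshot and collecting the returned alerts gives a simple version of centralized monitoring. A real deployment would also cover image stability, obstruction and server metrics, which need image analysis and system telemetry beyond this sketch.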

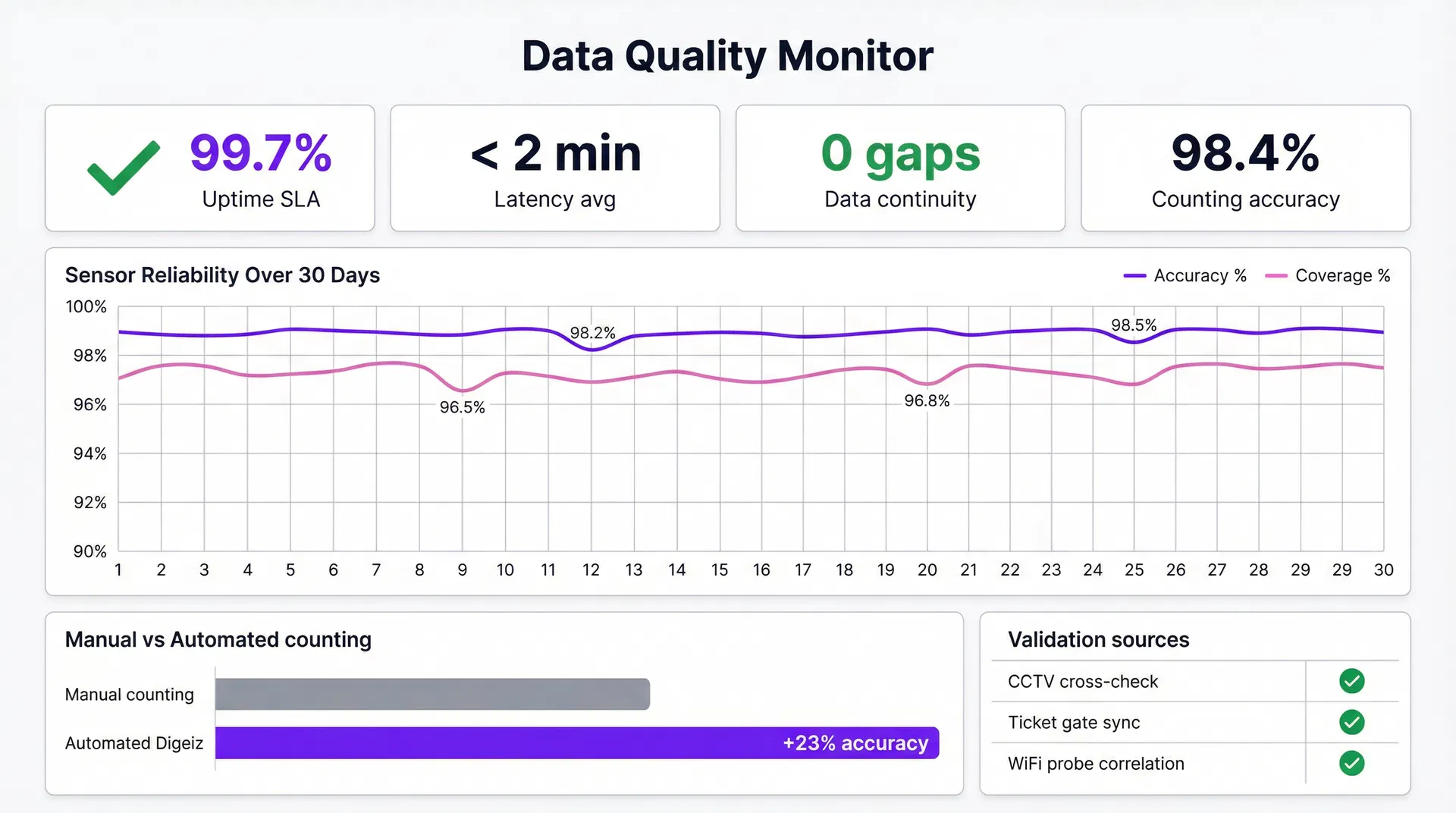

Data monitoring and quality assessment

Beyond monitoring video infrastructure, the platform continuously evaluates the quality of the analytics data produced by the system.

The data monitoring framework performs several functions:

- Monitoring key performance indicators

- Detecting abnormal data patterns

- Identifying missing data

- Verifying dataset completeness

Automated monitoring tools detect anomalies by comparing current metrics against historical patterns. When anomalies are detected, alerts are generated and reviewed to determine whether corrective actions are required.
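One common way to compare a current metric against historical patterns is a z-score test. The sketch below assumes that approach; the source does not state which statistical method the platform uses, and the function name `is_anomalous` and the threshold of 3 standard deviations are illustrative choices.

```python
from statistics import mean, stdev
from typing import Sequence


def is_anomalous(
    current: float,
    history: Sequence[float],
    z_threshold: float = 3.0,  # assumed threshold, for illustration
) -> bool:
    """Flag `current` if it lies more than `z_threshold` standard
    deviations away from the mean of `history`."""
    if len(history) < 2:
        # Not enough history to estimate a spread; do not raise an alert.
        return False
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # History is perfectly constant: any deviation is unusual.
        return current != mu
    return abs(current - mu) / sigma > z_threshold
```

In practice `history` would hold values for comparable periods (for example, the same hour on the same weekday over previous weeks), so that normal daily and weekly cycles are not flagged as anomalies.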


Data reconstruction

In rare cases, data gaps may occur due to infrastructure incidents such as hardware failures, network outages or power interruptions. The platform includes mechanisms to automatically detect missing data and reconstruct datasets when necessary.

Data reconstruction relies on statistical models that leverage:

- Historical traffic patterns

- Correlations with related datasets

- Historical distribution of visits

The platform maps dependencies across all analytics pipelines and datasets. Monitoring tools continuously check for anomalies and missing records. When inconsistencies are detected, automated processes reconstruct the missing information while preserving the overall structure of the dataset. Incident logs and monitoring dashboards provide full transparency on reconstruction events and system status.
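To make the idea concrete, here is a minimal sketch of gap filling from a historical traffic profile. It is an assumption-laden example, not the platform's statistical model: the function `reconstruct_day`, the use of `None` to mark missing hours, and the simple scaling rule are all hypothetical.

```python
from typing import List, Optional, Sequence, Tuple


def reconstruct_day(
    observed: Sequence[Optional[int]],
    historical_profile: Sequence[float],
) -> Tuple[List[int], List[bool]]:
    """Fill missing hourly counts (None) using a historical profile.

    observed: hourly counts for one day, None where data is missing.
    historical_profile: typical count for each hour (same length).
    Returns the filled counts and a flag per hour marking reconstructed values.
    """
    if len(observed) != len(historical_profile):
        raise ValueError("observed and historical_profile must have equal length")

    # Hours where both an observation and a historical value are available.
    known = [(o, h) for o, h in zip(observed, historical_profile) if o is not None]
    hist_total = sum(h for _, h in known)
    # How busy today is relative to a typical day, based on observed hours only.
    scale = sum(o for o, _ in known) / hist_total if hist_total > 0 else 1.0

    filled: List[int] = []
    reconstructed: List[bool] = []
    for o, h in zip(observed, historical_profile):
        if o is None:
            filled.append(round(h * scale))
            reconstructed.append(True)
        else:
            filled.append(o)
            reconstructed.append(False)
    return filled, reconstructed
```

Returning the `reconstructed` flags alongside the values keeps every filled hour traceable, which is the kind of information an incident log or dashboard would need in order to report reconstruction events.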

Data quality validation

Ensuring reliable analytics requires systematic validation of the generated datasets. Data quality assessment includes:

- Verifying accuracy of analytics outputs

- Confirming completeness of datasets

- Ensuring accessibility of historical data

This end-to-end quality process runs in six stages: camera intake and indexing, video stream quality monitoring, automated anomaly detection, alerting and ticketing, data reconstruction, and output quality checks. Each stage is automated and runs continuously across all deployed sites. Audits of output accuracy against manual ground truth show over 95% agreement.
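For readers who want to see how completeness and agreement figures of this kind could be computed, the sketch below uses two simple definitions. Both are assumptions made for illustration: the source does not define how completeness or agreement is measured, and the function names are hypothetical.

```python
def completeness(received_records: int, expected_records: int) -> float:
    """Share of expected records that were actually received (0.0 to 1.0)."""
    if expected_records <= 0:
        raise ValueError("expected_records must be positive")
    return received_records / expected_records


def count_agreement(automated_count: float, manual_count: float) -> float:
    """Agreement between an automated count and a manual ground-truth count,
    defined here (as an assumption) as 1 minus the relative absolute error,
    floored at 0.0."""
    if manual_count <= 0:
        raise ValueError("manual_count must be positive")
    return max(0.0, 1.0 - abs(automated_count - manual_count) / manual_count)
```

For example, receiving 98 of 100 expected records gives a completeness of 0.98, and an automated count of 96 against a manual count of 100 gives an agreement of about 0.96 under this definition.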

At deployment completion, validation reports can be produced to document the quality of the data generated by the system. These processes ensure that analytics outputs remain reliable and interpretable for clients.

Build reliable analytics from video infrastructure

Discover how the Digeiz platform ensures the accuracy and reliability of audience analytics.